RDF on Hadoop and Schema on Read vs. Schema on Write

One of the challenges for any Big Data solution is dealing with scale, and RDF stores are no exception: scaling to billions of RDF triples (the rough equivalent of rows in the SQL world) is not trivial. Hadoop, on the other hand, is great at scaling out on commodity hardware, a feature every MPP (massively parallel processing) database would like to have.

I have been wondering for a while why nobody has tried to combine the strengths of the two approaches to build what I call Big Linked Data: Hadoop infrastructure, able to scale out gracefully, and a SPARQL interface to access the data. In this post we examine the merits of a solution that combines Hadoop with RDF and take a look at a case study: SPARQL City.

SQL and SPARQL leverage schema on write, Hadoop leverages schema on read

RDF stores are essentially column stores, and they have their own solutions for dealing with scale and in particular for storing triples. But using Hadoop as the infrastructure to host RDF triples has more benefits than “outsourcing” the hard work of building a column store from scratch.

Hadoop is built on HDFS (its distributed filesystem) and offers a whole stack of services on top of it. Flat files are stored redundantly in HDFS, and they can be accessed or processed using tools such as Pig and Oozie (processing and workflow) or HBase and Hive (data access via key-value operations or SQL).

This is exactly the premise that SPARQL City builds on. Instead of using Hive-SQL to access data, SPARQL City offers a SPARQL interface to access data stored in HDFS. In addition, it also supports the same functionality for data stored in other back-ends – SQL and NoSQL stores. This offers a number of advantages, but also raises some questions.

The advantages:

- SPARQL is ideal for performing operations on graphs as it is a graph query language

- SPARQL is standardized, thus offering portability

- SPARQL offers the option for federated querying, thus enabling simultaneous access to a number of back ends
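To make the first point a bit more concrete, here is a minimal sketch of triple-pattern matching, the basic operation behind a graph query language such as SPARQL. It is plain Python over an in-memory list, not a SPARQL engine; the sample data and the `match` and `friends_of_friends` helpers are invented for illustration.

```python
# Hypothetical data: (subject, predicate, object) triples.
TRIPLES = [
    ("alice", "knows", "bob"),
    ("bob", "knows", "carol"),
    ("alice", "worksFor", "acme"),
]


def match(pattern, triples):
    """Yield variable bindings for one triple pattern.

    Terms starting with '?' are variables; any other term must match exactly.
    """
    for triple in triples:
        bindings = {}
        for term, value in zip(pattern, triple):
            if term.startswith("?"):
                # A variable repeated within the pattern must bind consistently.
                if bindings.setdefault(term, value) != value:
                    break
            elif term != value:
                break
        else:
            yield bindings


def friends_of_friends(person, triples):
    """Join two patterns: `person knows ?x` and `?x knows ?y`."""
    result = []
    for first in match((person, "knows", "?x"), triples):
        for second in match((first["?x"], "knows", "?y"), triples):
            result.append(second["?y"])
    return result
```

In SPARQL the same join would be written declaratively as two triple patterns sharing the variable `?x`, which is what makes the language a natural fit for graph-shaped questions.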

Since the advantages were clear, my conversation with the SPARQL City team focused on the questions:

a. How does it all work, and how is the SPARQL engine connected to HDFS? In SPARQL City’s product outline they refer to HDFS as their native store and to Hadoop, SQL and NoSQL stores as alternatives they can connect to. Why make this distinction between HDFS and Hadoop, and which layers and versions of Hadoop do they connect to (1.0 vs 2.0 – HDFS – YARN – other)?

The main concern here was whether the connection relied on the Hadoop 1.0 MapReduce infrastructure, in which case performance would be an issue: MapReduce was designed for batch jobs, and its performance on interactive queries is lackluster. SPARQL City connects to HDFS via its own proprietary layer, bypassing MapReduce. It leverages an in-memory compression layer for this, thus promising very fast access.

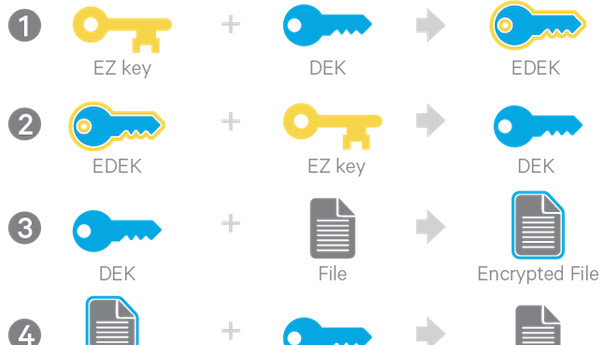

b. How does the Hadoop schema-on-read approach play with the RDF schema-on-write approach? SPARQL City’s product outline mentions that “The underlying RDF data model does not require a schema”, which sounds like a contradiction in terms.

RDF data have structure, and they comply with a schema that clients rely on to access the data. This schema is known and enforced in advance (schema-on-write). Data stored in HDFS, on the other hand, are stored in flat files, and even though there can be some sort of schema governing them too, it is typically implicit: think spreadsheet files and logs, for example. Clients interpret the data at will (schema-on-read).
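The difference can be sketched in a few lines of Python. This is only an illustration of the two ideas, not how any particular store implements them; the `SCHEMA` dictionary, the sample data and all function names are made up for the example.

```python
import csv
import io

# Schema-on-write: the schema is fixed up front and enforced when data is stored.
SCHEMA = {"id": int, "name": str, "age": int}  # hypothetical schema


def write_validated(store, record):
    """Append a record only if it matches SCHEMA; raise ValueError otherwise."""
    if set(record) != set(SCHEMA):
        raise ValueError(f"unexpected fields: {sorted(record)}")
    for field, expected_type in SCHEMA.items():
        if not isinstance(record[field], expected_type):
            raise ValueError(f"{field} must be {expected_type.__name__}")
    store.append(record)


# Schema-on-read: raw text is stored as-is; each reader imposes its own view.
RAW = "1,Ann,34\n2,Bob,n/a\n"  # hypothetical flat file content


def read_names(raw):
    """One reader only cares about the second column."""
    return [row[1] for row in csv.reader(io.StringIO(raw))]


def read_ages(raw):
    """Another reader treats the third column as an integer where possible."""
    ages = []
    for row in csv.reader(io.StringIO(raw)):
        try:
            ages.append(int(row[2]))
        except ValueError:
            ages.append(None)  # the reader, not the store, decides how to cope
    return ages
```

With schema-on-write, the bad value `n/a` would have been rejected at load time; with schema-on-read it is stored happily and each client has to decide what to do with it.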

There are some tools around that allow accessing non-RDF data as RDF, but they demand mapping from the source files to an RDF schema in order to work. SPARQL City connects to flat files and leverages a reflection mechanism that auto-maps them to an RDF schema.
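As a rough idea of what auto-mapping a flat file to RDF could look like, here is a minimal sketch that derives triples from a CSV header. It is an assumption about the general technique, not SPARQL City's actual mechanism (which is proprietary); the `BASE` namespace and the `csv_to_triples` function are hypothetical.

```python
import csv
import io

BASE = "http://example.org/"  # placeholder namespace, an assumption


def csv_to_triples(text, entity):
    """Derive triples from CSV text that has a header row.

    Each data row becomes a subject, each column header becomes a predicate,
    and each cell becomes a plain string literal.
    """
    triples = []
    reader = csv.DictReader(io.StringIO(text))
    for index, row in enumerate(reader, start=1):
        subject = f"<{BASE}{entity}/{index}>"
        for column, value in row.items():
            predicate = f"<{BASE}{entity}#{column}>"
            # Note: no escaping or datatype detection; a real mapper needs both.
            triples.append((subject, predicate, f'"{value}"'))
    return triples
```

The predicates here are generated from the column names, so they do not line up with any established vocabulary.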

This is somewhat controversial, as one of the features of RDF data that is completely ignored here is the existence of a pool of carefully crafted schemas for a number of domains. This is very atypical and puts SPARQL City in a different league from other RDF stores out there. Not giving users the option to map their data to the schema of their choice seems a bit odd.

However, this is a design choice, and the ability to map to schemas of choice could certainly be implemented. As things stand, it also means there is no inference, and SPARQL is used purely as the query language of choice.

This is a unique proposition, and one that sits in the middle between RDF stores and Hadoop infrastructure. There is also academic work that uses Hadoop to process RDF data, but this is different for a number of reasons and relies on the MapReduce framework, making it inappropriate for interactive queries. SPARQL City mentioned they are still developing their product (SPARQLVerse), so it’s worth keeping an eye on them to see which way they will be headed.