In-memory computing: Where fast data meets big data

The evolution of memory technology means we may be about to witness the next wave in computing and storage paradigms. If Hadoop disrupted the market by making it easy to pool commodity hardware with spare compute and storage, could the next disruption come from doing the same for spare compute and memory?

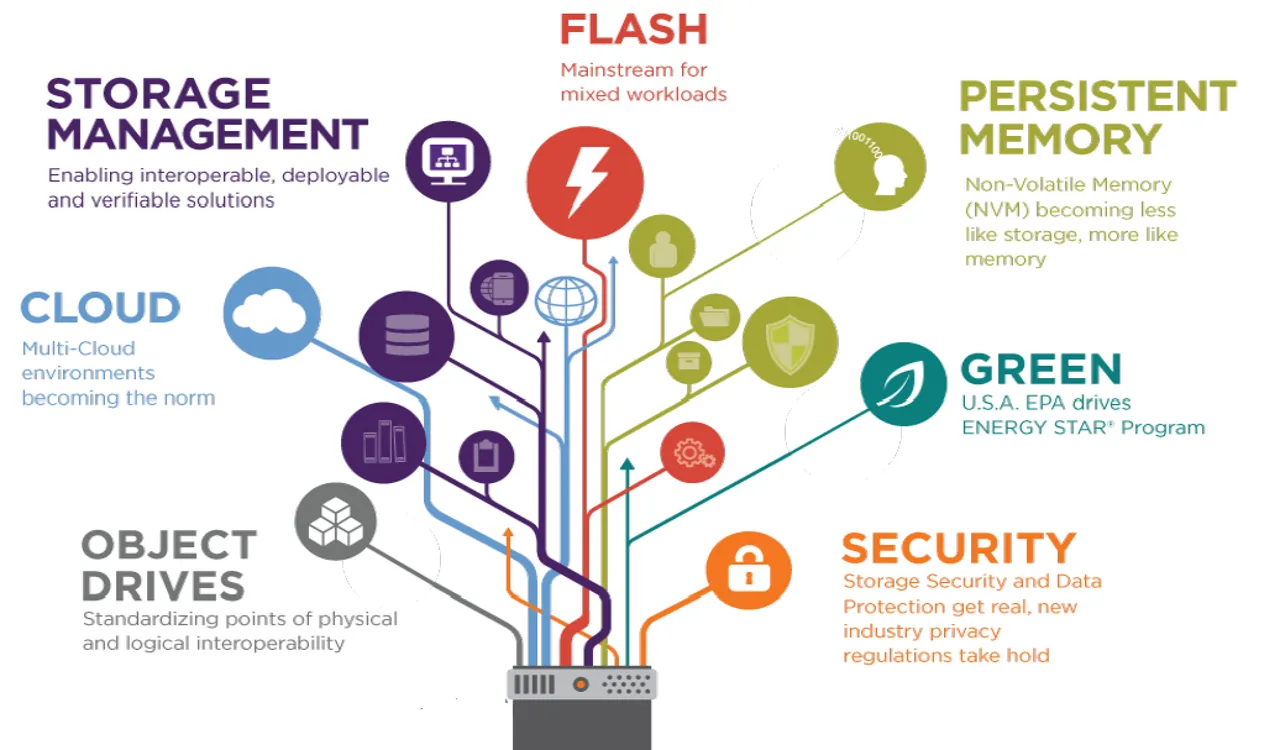

Traditionally, databases and big data software have been built to mirror the realities of hardware: memory is fast, transient and expensive; disk is slow, permanent and cheap. But as hardware changes, software is following suit, giving rise to a range of solutions built around in-memory architectures.

The ability to do everything in memory is appealing, as it promises massive speedups in operations. However, designing new architectures that make the most of available memory brings challenges of its own, and there is a wide range of approaches to in-memory computing (IMC).

Some of these approaches were discussed this June in Amsterdam, at the In Memory Computing Summit EMEA. The event featured sessions from vendors, practitioners and executives and offered an interesting snapshot of this space. As in-memory architectures see wider adoption, we’ll be covering them more often, kicking off with the IMC Summit organizers: GridGain.

First off, IMC is not new. Over time, caching has been increasingly used to speed up data-related operations. However, as memory technology evolves and the big data mantra spreads, some new twists have been added: memory-first and HTAP.