What is the right balance between innovation and regulation in AI? Long views on AI, Part 1

What AI model maker would take the risk to make any powerful model if they could be responsible for anything someone might possibly do with it?

This question is posed by Dan Jeffries – author, futurist, engineer, and systems architect. I’ve had the pleasure of conversing with him a couple of times: first about the AI Infrastructure Alliance, which he leads, and then about generative AI. I’ve noticed some of Jeffries’ recent posts about OpenAI, AI doomers and upcoming regulation, most notably the EU AI Act.

I’ve covered the EU AI Act, and generally consider it and its tiered approach a step in the right direction. I also thought that introducing the notion of general purpose AI systems powered by foundation models made sense. As far as I understand, uses of such a system may or may not be regulated, depending on how they are categorized.

The EU AI Act effect: Background, blind spots, opportunities and roadmap

In addition, there are specific requirements for foundation models, covering areas such as data governance and compute. That means foundation models can be evaluated, and will probably converge towards compliance.

In this post, Jeffries makes some good points about fearmongering and innovation. He also lays out a simple framework for regulation, which looks reasonable. It certainly made me think long and hard about finding the right balance between innovation and regulation in AI.

How can we find the right balance between innovation and regulation in AI?

Here are what I believe are some important questions to ask, along with the answers I’ve arrived at.

1. If foundation models can be used in a number of settings, including in ones that would be regulated if dedicated models were developed for them, does it make sense to regulate foundation models too?

Probably yes, and we don’t need to include AI doomerism in this conversation.

Let’s take surveillance, for example. I picked the example on purpose, because you’d think surveillance would be regulated. It is not, and that’s a good point Jeffries makes – it probably should be.

If surveillance is regulated, and foundation models can be used for that or any other regulated application, I don’t see why they should be exempt from regulation.

However, there is a lot of nuance in defining “use”. See the next point.

2. What is the threshold that distinguishes a foundation model from a non-foundation one?

Capabilities, but it’s not straightforward at all.

I’ve seen ideas floated such as using the amount of compute used to train a model as a proxy for its capabilities, which I don’t think will work.

If a model can be *directly* used to power regulated applications such as surveillance, then it should be subject to the same regulation dedicated models are. The key word here is *directly*.

A surveillance system contains many components, both software and hardware, from cameras to databases and image recognition algorithms. None of these should or could be regulated in isolation. But if a model does have image recognition capabilities and can *directly* connect to a camera and a database and automate surveillance, then it makes sense to regulate it.

Emphasis on *automate* here. See the next point.

3. If foundation models are to be regulated, what type of oversight should be applied to them and how will it be enforced?

The oversight applied to high-risk systems, as defined in the EU AI Act, seems about right.

There’s a syllogism to unpack here. The EU AI Act originally adopted the definition proposed by the OECD with respect to what it applies to:

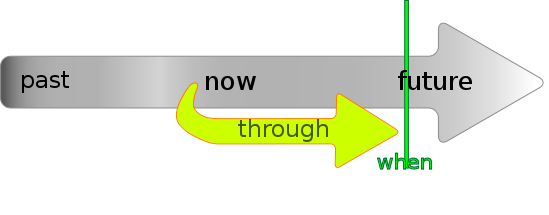

“The OECD defines an Artificial Intelligence (AI) System as a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing real or virtual environments”.

The OECD has issued a revised definition, but the emphasis remains on decisions. That is the whole point of specifically regulating AI systems – the automation part. A camera or a database can be used by people to make decisions. An AI system can make decisions autonomously.

In the EU AI Act, AI systems are classified into four categories according to the perceived risk they pose. Unacceptable-risk systems are banned entirely (although some exceptions apply); high-risk systems are subject to rules of traceability, transparency and robustness; low-risk systems require transparency on the part of the supplier; and minimal-risk systems have no requirements set for them.

Requiring traceability, transparency and robustness from foundation models seems reasonable. Stanford researchers have evaluated foundation model providers like OpenAI and Google for their compliance with the EU AI Act, so we have an idea of what this would look like in practice.

It does not look like asking foundation model developers to provide this information would impose excessive overhead or stifle competition. But it does look like having them comply with these requirements would create a level playing field and benefit everyone using them directly or indirectly.

4. Should there be a distinction between regulating big tech models vs. open source models?

Probably not, counter-intuitive as it may seem.

First, because it’s hard to draw a line. Case in point – does OpenAI count as big tech?

Second, because big tech, open source and innovation are not mutually exclusive. Case in point – Meta, IBM and the newly announced AI Alliance.

Third, because open source is also a nuanced concept. Case in point – Llama and AI model weights.

The question of whether governments should regulate open foundation models, and how to do so, is the key to finding the right balance between innovation and regulation in AI. Experts from industry, academia, and government convened to outline a path forward at a Princeton-Stanford workshop on How to Promote Responsible Open Foundation Models.

This is the first in the Long Views on AI series of short posts about important questions posed by people who think deeply and far ahead about technology and its impact, focusing on AI in particular. The idea is to introduce a question, and tease some answers as provided and/or inspired by the person who posed the question. Feedback welcome.