Data Modeling for APIs. Part 5: Modeling vs. Meta-Modeling

Recently I was involved in the creation of a data model for a project in the Energy domain. As this was an international, multi-partner project with many stakeholders and their respective components, a dilemma emerged for debate: to model, or to meta-model? I use this occasion as an example to discuss the pros and cons of each choice, and how the two can be combined. But first, some background on the project itself.

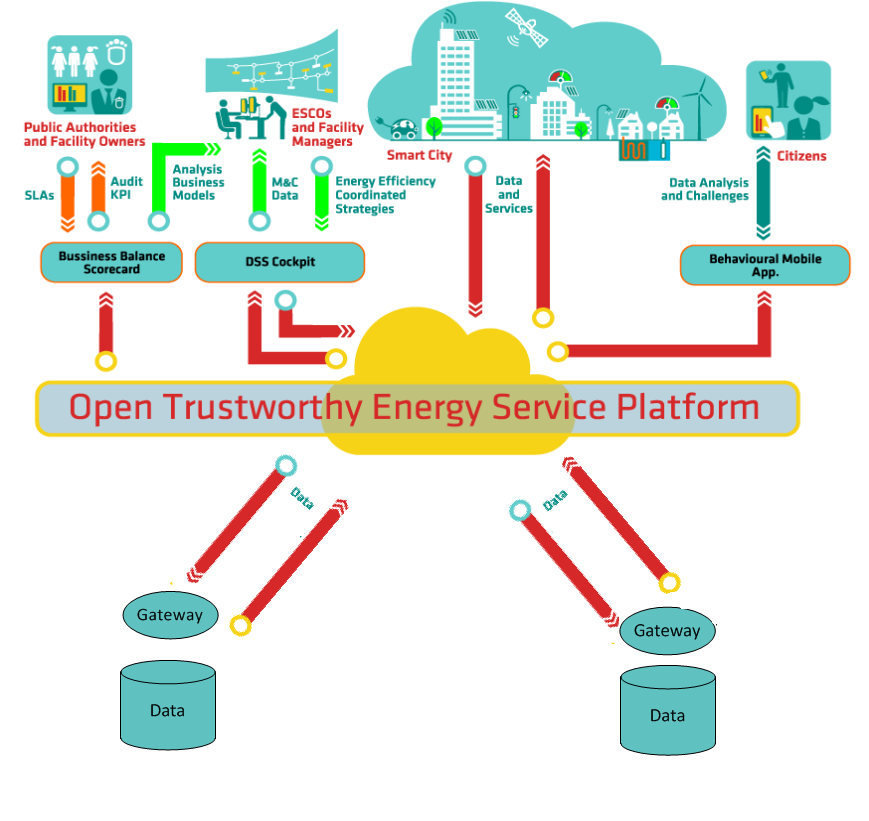

The main premise of the project is to create infrastructure to deliver services and applications for Smart Cities. More specifically, the project involves a number of Gateways collecting and delivering Smart Grid data, a Middleware layer aggregating and exposing that data via APIs, and a services layer delivering end-user applications on top of that data. To make everyone's life easier, existing components were reused as much as possible. The Middleware layer was based on existing work from a previous project in the Energy domain, and this is where the debate began.

The task of this Middleware layer is twofold: on the one hand, to integrate data coming from different Gateway sources, and on the other, to offer Services to the application layer. The Middleware implementation uses a meta-model for this, which basically consists of three simple classes: Entity, Attribute and Message.

Meta-models: deceptively simple to create, painfully complex to use. Source: Eclipse project

The reason for this minimalistic approach is simple and clear: as each Gateway to be integrated has its own data model, the meta-model provides an abstraction over multiple data models, unifying them all under one basic structure. This is a very pragmatic approach: if a data model were introduced at this level, the data model of every new Gateway would have to be mapped to the Middleware data model, which would make Gateway integration a cumbersome and error-prone task.
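To make the meta-model approach concrete, here is a minimal Python sketch. The article only names the three classes, so the fields below (`entity_id`, `entity_type`, `attributes`, `source_gateway`), the `ingest` helper and the Gateway payloads are all hypothetical. What it is meant to show is that any Gateway's payload can be wrapped without writing a per-Gateway mapping.

```python
from dataclasses import dataclass, field
from typing import Any


# Hypothetical shape of the three meta-model classes; the article names
# Entity, Attribute and Message but does not specify their fields.
@dataclass
class Attribute:
    name: str
    value: Any


@dataclass
class Entity:
    entity_id: str
    entity_type: str  # free-form label passed through from the Gateway
    attributes: list[Attribute] = field(default_factory=list)


@dataclass
class Message:
    source_gateway: str
    entities: list[Entity]


def ingest(gateway_id: str, payload: dict[str, dict[str, Any]]) -> Message:
    """Wrap any Gateway payload in the meta-model, with no per-Gateway schema.

    Assumes `payload` maps an entity id to a flat dict of properties,
    one of which may be a "type" label.
    """
    entities = []
    for entity_id, props in payload.items():
        attrs = [Attribute(k, v) for k, v in props.items() if k != "type"]
        entities.append(Entity(entity_id, str(props.get("type", "unknown")), attrs))
    return Message(gateway_id, entities)


# Two hypothetical Gateways with unrelated vocabularies use the same code path.
msg_a = ingest("gw-a", {"m1": {"type": "SmartMeter", "kWh": 12.5}})
msg_b = ingest("gw-b", {"dev-7": {"type": "meter", "energy_wh": "12500"}})
```

Both Gateways are integrated by the same few lines, but the resulting Entities still carry each Gateway's own labels, units and value types (`kWh` as a number versus `energy_wh` as a string), and that burden is passed on to whoever consumes them.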

On the other hand, this makes for a nightmare for application developers: if everything is abstracted by this meta-model, application developers have to inspect each Entity they receive to figure out what it is and how it is connected to other Entities in the system. This is practically equivalent to having to learn the data model of each Gateway, which is exactly what the Middleware is supposed to abstract away. In addition, it would be next to impossible to generate clients for the Middleware or to perform data type checks, as everything would have to be done on the application side.
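The cost on the application side can be sketched as follows, assuming the Middleware returns meta-model Entities as JSON in a hypothetical shape. Computing something as simple as total energy forces the application to hard-code each Gateway's type labels, attribute names, units and value types; the function name `total_energy_kwh` is illustrative only.

```python
import json

# Hypothetical JSON an application might receive from a meta-model-only API.
# Both entities are meters, but each Gateway labels, names and encodes
# the energy value differently.
RESPONSE = """
[
  {"id": "m1", "type": "SmartMeter",
   "attributes": [{"name": "kWh", "value": 12.5}]},
  {"id": "dev-7", "type": "meter",
   "attributes": [{"name": "energy_wh", "value": "12500"}]}
]
"""


def total_energy_kwh(raw: str) -> float:
    """Sum meter energy in kWh, which requires knowing every Gateway's conventions."""
    total = 0.0
    for entity in json.loads(raw):
        attrs = {a["name"]: a["value"] for a in entity["attributes"]}
        # Each branch encodes knowledge of one Gateway's data model.
        if entity["type"] == "SmartMeter" and "kWh" in attrs:
            total += float(attrs["kWh"])
        elif entity["type"] == "meter" and "energy_wh" in attrs:
            # This Gateway sends Wh, and as a string.
            total += float(attrs["energy_wh"]) / 1000.0
        # Any other label is silently skipped; nothing flags the omission.
    return total
```

A third Gateway labelling its meters `Meter` would simply be ignored without an error, and no client generator or type checker could catch it, because the only contract the API offers is "a list of Entities".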

There was a debate on the topic, weighing the pros and cons of each option: simplicity for Gateway integration versus simplicity for application development. In the end, simplicity for application development prevailed since, after all, the long-term success of the project depends on it: it would be pretty hard for a healthy application ecosystem to develop on top of this Middleware if it only supported a meta-model.

So the meta-model will still be used internally to integrate Gateways, but on the upper layer the API exposed to application developers will use a proper data model. The existing meta-model will be mapped to this data model, which is created by extending a standard.
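With the meta-model kept internal, the mapping to the exposed data model can be concentrated in one place in the API layer. The following is a minimal sketch, assuming a simplified internal `Entity` and a hypothetical typed `MeterReading` class; the names, fields and the `to_api_model` function are illustrative and do not reflect the project's actual code.

```python
from dataclasses import dataclass
from typing import Any


# Minimal stand-in for the internal meta-model (fields are hypothetical).
@dataclass
class Entity:
    entity_id: str
    entity_type: str
    attributes: dict[str, Any]


# Typed class exposed by the API; name and fields are illustrative only.
@dataclass(frozen=True)
class MeterReading:
    meter_id: str
    energy_kwh: float


# Per-Gateway knowledge lives here, once, instead of in every application.
_MAPPERS = {
    "SmartMeter": lambda e: MeterReading(e.entity_id, float(e.attributes["kWh"])),
    "meter": lambda e: MeterReading(e.entity_id, float(e.attributes["energy_wh"]) / 1000.0),
}


def to_api_model(entity: Entity) -> MeterReading:
    """Map an internal meta-model Entity to the typed API data model."""
    try:
        mapper = _MAPPERS[entity.entity_type]
    except KeyError:
        raise ValueError(f"no mapping for entity type {entity.entity_type!r}") from None
    return mapper(entity)
```

Unmapped entity types now fail loudly in one place, and applications only ever see `MeterReading` objects with a fixed unit and value type, which is what makes client generation and type checks feasible.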

The IEC 61968 data model: complex to read, but specific. Source: Smart Distribution Wiki

To make things easier, a standard in the Energy domain (IEC 61970 and 61968, the Common Information Model) was adopted as the basis for the data model. Concepts, attributes and relationships from the Common Information Model were adopted, extended and combined to produce a data model that is standardized, well understood and documented.
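The "adopt, extend, combine" approach can be illustrated with a few classes named after CIM concepts. This is a heavily simplified sketch: only `mRID` and `name` from CIM's `IdentifiedObject` are kept, the real inheritance chains are flattened, the `usage_points` association is a simplification, and `GatewayMeter` with its `gateway_id` field is a hypothetical project extension, not part of the standard.

```python
from dataclasses import dataclass, field
from typing import Optional


# Greatly simplified classes named after IEC 61968/61970 CIM concepts.
# Only mRID and name (from CIM's IdentifiedObject) are kept; the real
# class hierarchy and attribute sets are much richer.
@dataclass
class IdentifiedObject:
    mRID: str
    name: str = ""


# "Adopt": reuse standard concepts as-is.
@dataclass
class UsagePoint(IdentifiedObject):
    pass


@dataclass
class Meter(IdentifiedObject):
    # "Combine": relate adopted concepts to each other.
    usage_points: list[UsagePoint] = field(default_factory=list)


# "Extend": a hypothetical project-specific subclass recording which
# Gateway a meter was integrated through. Not part of the standard.
@dataclass
class GatewayMeter(Meter):
    gateway_id: Optional[str] = None


up = UsagePoint("up-1")
meter = GatewayMeter("m1", "Meter 1", usage_points=[up], gateway_id="gw-a")
```

Because the extension subclasses a standard class, code written against the standard `Meter` concept still accepts a `GatewayMeter`, so the extension does not break what developers already know from the standard.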

Of course, the proof of the pudding is in the eating, so it remains to be seen how well this data model will serve the needs of application development. Meanwhile, some work will be required to map the existing meta-model to the new data model, but this should be relatively straightforward.

Part 1: Setting the stage | Part 2: REST and JSON | Part 3: SOAP and XML | Part 4: Linked Data and SPARQL | Part 5: Modeling vs. Meta-Modeling