DataStax Enterprise Graph 6.0: what’s new, and what’s coming?

DataStax just released version 6.0 of DataStax Enterprise (DSE), a major upgrade. We take an insider tour with Jonathan Lacefield, Senior Director of Product Management with DataStax, focusing on DSE Graph.

Even though news of the new DSE release has been out for a while, one of the principles to go by in the tech world is “never miss a chance to talk to core engineers”.

Lacefield has been with DataStax since 2013. He started out as a Solution Architect and has worked in Product Management for DSE Graph for the last couple of years, so he is just the person to talk to if you’re interested in Graph.

DSE got its Graph through the acqui-hire of Aurelius in 2015. Aurelius was Marko Rodriguez and Stephen Mallette’s firm and was responsible for Titan, the open source distributed graph database from which DSE Graph and JanusGraph sprang, as well as TinkerPop, the open source framework for graph processing.

As expected, it took some time to integrate (re-implement, essentially) Titan in DataStax. So the question now is “what’s new in DSE Graph 6.0?” There are some words on that in the DSE 6.0 release notes, but the chat with Lacefield shed a lot of light on things you won’t easily find there.

First off, it should be clear that when you get DSE, you get all of it, not just DSE Graph. That may seem like a trivial point to make, but it’s important to note. Unlike other graph databases that are purely focused on this model, DSE is more of a multi-model database like Cosmos DB, OrientDB or ArangoDB.

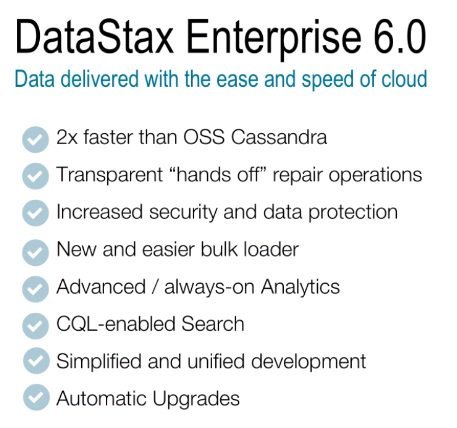

As such, Lacefield argued, the changes introduced in DSE 6.0 affect Graph as well. Those changes have already been discussed by my ZDNet co-contributor Andrew Brust, but there have also been some Graph-specific improvements, mostly in analytics and search.

One of the horizontal changes was in the compute and storage layers. As Lacefield pointed out, over the last couple of years new hardware and cloud architectures have challenged the existing architecture of Cassandra that DSE was based on. So what the DSE team has done is to evolve its architecture to something they call Advanced Performance.

What this means is that they redesigned the compute and storage layers to (a) be more lightweight and (b) utilize multi-core hardware architecture, improving performance (by up to 200%, as measured internally, per Lacefield) and enabling users to use fewer physical nodes to get the same results.

The second major change was in the way eventual consistency is handled. In DSE 6.0, a feature called NodeSync was added. As Lacefield said, NodeSync is a low-cost protocol that handles anti-entropy and ensures data is consistent. By default, NodeSync always runs in the background on every node, and validates that data is in sync on all replicas.

These improvements help lower both infrastructure cost and complexity: having fewer nodes to manage and less management overhead are both welcome changes. That also cross-cuts to Graph, as it brings better throughput in graph traversals and lower latency for operational applications. But that’s not all.

A summary of DSE 6.0 new features

Analytics was another key point Lacefield emphasized. DSE comes with Solr and Spark integrated in its platform, and that means data does not have to be moved around to do analytics and search. This is a key feature, and specifically for Graph there is something called Graph Frames that builds on Spark’s Data Frames and enables users to perform complex graph processing at scale on DSE via Spark.

There is an interesting side-effect of using DSE Graph via Spark: SQL. Lacefield explained that users can now do SQL on Graph data, which is sort of counterintuitive. The way this works is by flattening all Graph data into two tables (or Data Frames), one for nodes and one for edges, with all properties stored in those tables too. Except if things were that easy, there would be no need for graph databases.

There are good reasons why this does not work at scale. It means queries will get very complex to write, and very slow to execute. Lacefield knows this; however, he says the feature is meant as a way to give users unified access for simple use cases across all data stored in DSE via JDBC, for example through BI tools. For more complex scenarios, Lacefield says users can create the equivalent of views, namely custom Data Frames on Spark.
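To make the complexity point concrete, here is a minimal sketch of the two-table idea using Python's built-in `sqlite3` rather than DSE, Spark or JDBC. The table names, column names and sample data are all hypothetical and are not DSE's actual schema. It shows that even a simple two-hop question ("whom do my contacts know?") already needs one extra join on the edge table per hop.

```python
import sqlite3

# Hypothetical flattened graph: one table for nodes, one for edges.
# This is an illustration only, not DSE's actual schema.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE vertices (id INTEGER PRIMARY KEY, label TEXT, name TEXT);
    CREATE TABLE edges (src INTEGER, dst INTEGER, label TEXT);
    INSERT INTO vertices VALUES
        (1, 'person', 'alice'), (2, 'person', 'bob'),
        (3, 'person', 'carol'), (4, 'person', 'dave');
    INSERT INTO edges VALUES
        (1, 2, 'knows'), (2, 3, 'knows'), (3, 4, 'knows');
""")


def friends_of_friends(conn, name):
    """Two hops along 'knows' edges: each hop is another join on edges."""
    rows = conn.execute(
        """
        SELECT v2.name
        FROM vertices v0
        JOIN edges e1 ON e1.src = v0.id AND e1.label = 'knows'
        JOIN edges e2 ON e2.src = e1.dst AND e2.label = 'knows'
        JOIN vertices v2 ON v2.id = e2.dst
        WHERE v0.name = ?
        ORDER BY v2.name
        """,
        (name,),
    ).fetchall()
    return [row[0] for row in rows]


print(friends_of_friends(conn, "alice"))
```

Every additional hop adds another join on `edges`, which is why such queries become hard to write and slow to run as paths get longer.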

Views may address the complexity issue to some extent: it’s not that SQL would be less complex in that scenario, it’s just that the complexity would be hidden behind a view. As for the performance issue, Lacefield points out that they have implemented TinkerPop’s Gremlin on Spark. What this means is that queries will be executed via graph traversals, rather than joins.
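The difference between a traversal and a join can be illustrated with a small, plain-Python sketch. This is not Gremlin and not how DSE executes queries; the `build_adjacency` and `traverse` helpers and the sample data are hypothetical. The idea is that a traversal walks from a starting vertex along its outgoing edges, hop by hop, instead of matching whole tables against each other. In Gremlin, a comparable two-hop query would read roughly `g.V().has('name', 'alice').out('knows').out('knows')`.

```python
from collections import defaultdict


def build_adjacency(edges):
    """Index edges by (source vertex, edge label) for direct lookup."""
    adjacency = defaultdict(list)
    for src, label, dst in edges:
        adjacency[(src, label)].append(dst)
    return adjacency


def traverse(adjacency, start, labels):
    """Follow one edge label per hop, starting from a single vertex."""
    frontier = [start]
    for label in labels:
        # Each hop only touches the edges leaving the current frontier.
        frontier = [
            dst
            for vertex in frontier
            for dst in adjacency.get((vertex, label), [])
        ]
    return frontier


# Hypothetical sample data: (source, edge label, destination).
EDGES = [
    ("alice", "knows", "bob"),
    ("bob", "knows", "carol"),
    ("carol", "knows", "dave"),
]

adjacency = build_adjacency(EDGES)
print(traverse(adjacency, "alice", ["knows", "knows"]))
```

Adding a hop here means appending one more label to the list, rather than adding another join to the query.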

As for DSE’s roadmap, Lacefield said they will continue to walk down the multi-model path, aiming to come closer to a “store once, query anywhere” vision. Currently, this is only possible for analytics, where Spark enables users to mix and match data stored in Cassandra’s model with data stored as a Graph; it is not yet possible for operational data.

DSE 6.0 introduces some new Graph features too, among which is SQL access

There are a couple of broader points to be made here.

One, on the relationship with Cassandra and its community. As you may know, DataStax has been the commercial entity that builds on Cassandra and takes it to market as DSE. In the last couple of years DataStax took a step back from actively leading the community, but it still contributes about 85% of Cassandra’s code, according to Lacefield. That works both ways, as code contributed by the community is tested, hardened and eventually adopted by DataStax too.

Two, on operational Graph applications. Lacefield noted there is a growing number of DSE users who choose to use Graph for those. Some transition their applications from previous DSE implementations as they see Graph matches their data model better, others build new applications from scratch using Graph. And that is despite the penalty in performance compared to DSE’s base data model, as Graph offers features (mainly traversal) that Cassandra’s model does not have.

Cassandra has also been used as the back-end for Titan’s successor, JanusGraph, and that warrants some additional analysis; more on that to follow.

