The Engineer in the Machine: How Neo Is Rewriting What It Means to Build AI

A fully autonomous machine learning engineering agent. A benchmark that matters. And a question that cuts deeper than the hype: when a machine does the work, what happens to the learning part?

The race to automate software engineering is expanding to new territory: machine learning. Neo is a fully autonomous machine learning engineering agent that handles the entire pipeline from problem statement to deployed model.

Built by Gaurav and Saurabh Vij, Neo topped the MLE-Bench leaderboard and compressed a six-month production effort into one week. The harder question Neo raises – whether agentic AI accelerates learning or hollows it out – remains open.

Table of Contents

- Two Brothers and a Problem That Wouldn’t Let Go

- Meet Neo: The Machine Learning Kaggle Grandmaster

- From the Lab to the Loading Dock

- Inside the Loop: How Neo Thinks, and When It Asks for Help

- Taming Hallucinations: Multi-Agent Architecture as Error Correction

- The Tragedy of the Cognitive Commons: Does AI Hollow Out the Craft?

- What Comes Next: Kaggle Grandmaster as a Service

Two Brothers and a Problem That Wouldn’t Let Go

Gaurav and Saurabh Vij are brothers who have co-founded three startups together. That fact alone places them in a category most people only read about.

What’s more telling than the number is the thread running through all three: a persistent attention to minimizing human effort on work that shouldn’t require a human at all.

Gaurav spent the better part of a decade building and deploying machine learning systems: computer vision, fine-tuned large language models, inference pipelines at scale. Saurabh did his doctoral work in high-energy particle physics, contributing to experiments at CERN, including the collaboration that confirmed the existence of the Higgs boson – popularly nicknamed the “God particle.”

Along the way, Saurabh noticed something that would shape everything that came after: even at the world’s most prestigious physics labs, researchers were spending the majority of their time writing code rather than doing physics.

“At some point,” Saurabh recalls, “I was writing code every day, and it became 90% of my work.” He wasn’t alone. Across astronomy, chemistry, and biology, the pattern repeated: researchers with deep knowledge, intuition, and questions were being consumed by the infrastructure of machine learning. Cleaning datasets, setting up GPUs, debugging pipelines – months of grinding before a single scientific hypothesis could be tested.

Gaurav and Saurabh’s second company, Monster API, gave them the clearest view of the problem. The platform offered developers fine-tuning and deployment in a few clicks – no-code machine learning at scale. Thousands of developers used it. And most of them, it turned out, couldn’t build the models they wanted.

Not because they lacked intelligence or ambition, but because the path from raw data to a working model involves too many interdependent specialized decisions. You need to know how to preprocess the data. How to choose the architecture. How to evaluate what you’ve built. How to debug what you haven’t.

That was the gap. And it was large enough to build a company around.

Neo, Gaurav and Saurabh’s third venture, is their answer: a fully autonomous machine learning engineering agent, designed to handle the entire pipeline from problem statement to deployed model, with a human available to steer, question, and override, but not required to drive.

Meet Neo: The Machine Learning Kaggle Grandmaster

The AI landscape is thick with claims. Every week brings another product announcement, another benchmark, another assertion of superhuman capability. Most of it dissolves under close inspection. So when Neo’s team decided to validate their system publicly, they chose their ground carefully.

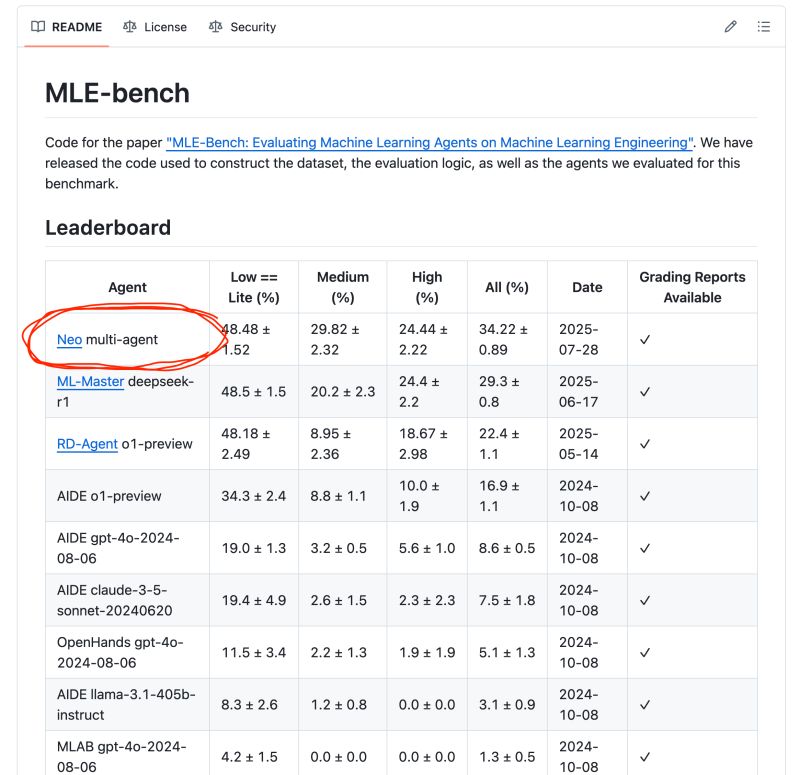

MLE-Bench was created by OpenAI specifically to evaluate agents on machine learning engineering tasks. It consists of 75 Kaggle competitions spanning the full range of machine learning problem types: text classification, image classification, tabular data, sequence-to-sequence tasks.

An agent runs each competition 3 times, for a total of 225 runs. The score is an average. The complexity tiers (light, medium, and high) are designed to separate systems that can handle routine tasks from those capable of genuine problem-solving.

To understand what the benchmark is measuring, it helps to know what the Kaggle ecosystem actually represents. There are roughly 300,000 machine learning engineers in the world. Of those, around 5,000 to 6,000 have achieved master status. Kaggle Grandmasters – people who consistently compete at the highest level – number around 500 globally.

These are specialists who command extraordinary salaries and, as Saurabh notes with a certain dry candor, “don’t want to work for a lot of companies. They want to work for SpaceX, NASA, Uber. Not a four-person startup.”

Neo achieved a medal (bronze, silver, or gold, by Kaggle standards) in 34.2% of MLE-Bench competitions, fully autonomously, without human intervention. When Neo launched on the leaderboard, it ranked first among all submitted agents.
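
As a rough illustration of how a headline number like this can come out of 75 competitions run 3 times each, here is a minimal sketch. It assumes the score is the per-seed share of competitions that earned any medal, averaged over seeds. The per-seed medal counts are invented for the example; they were chosen only because 77 medal-winning runs out of 225 rounds to 34.2%, and they are not Neo's actual results.

```python
from statistics import mean


def medal_rate(medals_by_seed: list[list[bool]]) -> float:
    """Percentage of competitions with any medal, averaged over seeds."""
    per_seed = [100.0 * sum(seed) / len(seed) for seed in medals_by_seed]
    return mean(per_seed)


# Hypothetical outcome: 75 competitions, 3 seeds, 26 + 26 + 25 = 77 medals.
# These counts are made up to be consistent with the reported figure.
N_COMPETITIONS = 75
medal_counts = [26, 26, 25]
runs = [[i < k for i in range(N_COMPETITIONS)] for k in medal_counts]

score = medal_rate(runs)
total_runs = N_COMPETITIONS * len(runs)
print(f"{score:.1f}% medal rate across {total_runs} runs")
```

Averaging over seeds matters because a single lucky run on one competition moves the score by only a third of a competition's weight.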

What that number represents in practice is a system capable of reading a problem statement, selecting from available architectures, running experiments, evaluating them, pivoting based on what it learns, and assembling a final solution.

“Neo is reasoning through ensembling strategies, stacking models together, exploring various opportunities, and choosing a path as an outcome or insight after doing certain experiments,” as Gaurav describes it.

The Vij brothers are not stopping at historical training data either. Neo is also designed to scan arXiv continuously, keeping pace with new techniques as they’re published.

From the Lab to the Loading Dock

Neo comes with some solid credentials. But the real world is always messier – the constraints more awkward, the data less clean. So the more interesting question is what Neo does when it encounters operational data from a real-world business problem.

One of Neo’s early design partners was a shipment-tracking company – “Google Maps for shipments,” in Saurabh’s words – processing three to four billion shipments annually. Their core machine learning challenge was ETA prediction: given historical shipping data, build a model that can accurately estimate when a delivery will arrive.

The acceptable error margin in the industry is 2 hours. On shipments with a 48 to 72 hour window, that’s a demanding target. Their existing team had spent 6 months building a model that hit that 2-hour threshold. It was a genuine engineering achievement, and it involved multiple people.

Then they handed the problem to Neo, operated by a single team member: a junior machine learning engineer, relatively new to the field. One week later, the model’s error margin was down to 90 minutes.

For a company managing billions of shipments, a 30-minute improvement in ETA accuracy cascades across customer experience, logistics planning, and operational efficiency. But what stands out isn’t the improvement in accuracy – it’s the compression of time and human resources. Saurabh estimates this as a 20-fold reduction in the effort required to reach the result.
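
To make the error margins concrete, the sketch below computes an average ETA error in minutes and checks it against the 2-hour target. The article does not say which metric the team used, so mean absolute error is an assumption here, and the timestamps and the function name `mean_abs_error_minutes` are invented for illustration.

```python
from datetime import datetime, timedelta


def mean_abs_error_minutes(predicted: list[datetime], actual: list[datetime]) -> float:
    """Average absolute gap between predicted and actual arrival, in minutes."""
    errors = [abs((p - a).total_seconds()) / 60 for p, a in zip(predicted, actual)]
    return sum(errors) / len(errors)


# Hypothetical arrivals and predictions (not data from the case study).
base = datetime(2024, 1, 1, 12, 0)
actual = [base, base + timedelta(hours=60), base + timedelta(hours=72)]
offsets_min = (-150, 90, 120)  # early by 150 min, late by 90 and 120 min
predicted = [a + timedelta(minutes=m) for a, m in zip(actual, offsets_min)]

TARGET_MINUTES = 120  # the 2-hour industry margin quoted in the text
mae = mean_abs_error_minutes(predicted, actual)
within_target = mae <= TARGET_MINUTES
print(f"MAE: {mae:.0f} min, within target: {within_target}")
```

Under this metric, moving from 120 to 90 minutes means the typical prediction lands half an hour closer to the real arrival time.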

The ETA case study suggests that the gap between benchmark performance and real-world utility, usually a wide one, may be narrower for Neo. The reasons have to do with how Neo handles the messiness.

Inside the Loop: How Neo Thinks, and When It Asks for Help

One of the more instructive design decisions in Neo is the human-in-the-loop mode. The system doesn’t pause after every action to request approval. But it also doesn’t silently barrel through an entire pipeline only to surface a result at the end.

Neo pauses at the boundaries between major phases. Dataset preprocessing complete: would you like to proceed, or adjust? Experiments run, top models selected: here’s the comparative analysis – your call.
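
The pattern of pausing only at phase boundaries can be sketched in a few lines. This is not Neo's implementation, which the article does not show; the phase names, the `review` callback, and the `"proceed"` convention are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PipelineRun:
    state: dict = field(default_factory=dict)
    log: list[str] = field(default_factory=list)


Phase = tuple[str, Callable[[dict], dict]]  # (phase name, step function)
Reviewer = Callable[[str, dict], str]       # returns "proceed" or anything else


def run_with_checkpoints(phases: list[Phase], review: Reviewer) -> PipelineRun:
    """Run each phase to completion, then ask the reviewer before moving on."""
    run = PipelineRun()
    for name, step in phases:
        run.state = step(run.state)
        run.log.append(f"{name}: done")
        if review(name, run.state) != "proceed":
            run.log.append(f"{name}: halted by reviewer")
            break
    return run


# Hypothetical phases standing in for preprocessing, experiments, selection.
phases = [
    ("preprocess", lambda s: {**s, "rows": 1000}),
    ("experiments", lambda s: {**s, "scores": {"gbm": 0.91, "mlp": 0.88}}),
    ("select", lambda s: {**s, "best": max(s["scores"], key=s["scores"].get)}),
]

approved = run_with_checkpoints(phases, lambda name, state: "proceed")
stopped = run_with_checkpoints(
    phases, lambda name, state: "stop" if name == "experiments" else "proceed"
)
print(approved.state["best"], stopped.log)
```

The reviewer is consulted once per phase rather than once per action, which is the middle ground the section describes.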

The interaction itself happens through a chat interface, and the control it offers is genuinely fine-grained. Gaurav walks through a concrete example: building a content moderation model to detect abusive language.

There is no column in the dataset labeled “abusive.” Neo constructs that feature from natural language strings, autonomously. But a human can intervene: add a feature for hate speech specifically. Combine features. Evaluate on F1 score rather than raw accuracy. Try this ensembling strategy, not that one. Don’t use GPU for this step, do use it for that one.
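
Why a human might ask for F1 rather than raw accuracy is easiest to see on imbalanced data, which moderation datasets often are. The sketch below uses invented labels (5 abusive messages in 100) and two hypothetical classifiers; none of it comes from Neo or from the example Gaurav describes.

```python
def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true: list[int], y_pred: list[int], positive: int = 1) -> float:
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical data: 1 = abusive, 0 = clean.
y_true = [1] * 5 + [0] * 95
always_clean = [0] * 100                         # never flags anything
flagger = [1, 1, 1, 1, 0] + [1] * 6 + [0] * 89   # catches 4 of 5, 6 false alarms

for name, pred in (("always_clean", always_clean), ("flagger", flagger)):
    print(name, accuracy(y_true, pred), round(f1_score(y_true, pred), 3))
```

The model that never flags anything wins on accuracy while being useless for moderation; F1 ranks the two the other way around.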

The result is something closer to collaboration than delegation. Neo is doing the heavy lifting – training models, running evaluations, managing the environment – while the human contributes judgment and domain knowledge where these matter.

This distinction between the macroscopic view (the overall architecture, the pipeline, the flow between agents) and the microscopic view (the artifacts, the code, the interdependencies within a single step) is something Gaurav and Saurabh return to repeatedly as a design principle. It’s what allows a non-expert to build something meaningful with Neo while still understanding what they’ve built, and why.

Taming Hallucinations: Multi-Agent Architecture as Error Correction

Any serious discussion of agentic systems has to reckon with hallucination and error propagation. In a long pipeline where each step depends on the previous one, a small error early compounds aggressively. Get the data preprocessing wrong and you’ve poisoned everything downstream.
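
A back-of-the-envelope model shows how quickly this compounds. It assumes every stage succeeds independently with the same probability, which is a simplification introduced here and not a claim from the Neo team; the 95% figure is likewise just an example value.

```python
def pipeline_success(per_stage_success: float, stages: int) -> float:
    """Chance that every stage is correct, assuming independent stages."""
    return per_stage_success ** stages


# Example value only: each stage is right 95% of the time.
for stages in (1, 5, 10, 20):
    print(stages, round(pipeline_success(0.95, stages), 3))
```

Even with stages that are individually reliable, a ten-stage pipeline under this model finishes cleanly only about six times in ten, which is why catching errors between stages matters more than perfecting any single one.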

Neo’s approach to this problem involves two overlapping strategies. Gaurav and Saurabh describe both carefully but stop short of full disclosure, because a patent filing is in progress.

The first strategy is narrowing scope through fine-tuning. A model that has been fine-tuned for a specific subtask hallucinates less on that subtask than a general-purpose model would.

Neo’s architecture combines proprietary fine-tuned models that perform better in certain machine learning-specific domains with commercial foundation models that carry advantages in reasoning and general coding. Each agent is given a narrower brief than a general-purpose model would receive.

The second strategy is systemic error correction through multi-agent orchestration. Different agents in the pipeline evaluate each other’s outputs. One agent acts as a judge for another, catching hallucinations at the source before they propagate.
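
The judge idea can be sketched generically as a generate-then-verify loop. Neo's actual orchestration is undisclosed, so everything below is an assumption: the function names, the retry limit, and the toy check in which a judge rejects a column name that does not exist in the dataset schema.

```python
from typing import Callable, Optional

Generator = Callable[[str, int], str]  # (task, attempt number) -> candidate
Judge = Callable[[str, str], bool]     # (task, candidate) -> accept?


def generate_with_judge(
    task: str, generate: Generator, judge: Judge, max_attempts: int = 3
) -> Optional[str]:
    """Return the first candidate the judge accepts, or None if none pass."""
    for attempt in range(max_attempts):
        candidate = generate(task, attempt)
        if judge(task, candidate):
            return candidate
    return None


# Toy stand-ins for two agents (hypothetical, no model calls involved).
SCHEMA = {"text", "label"}


def worker(task: str, attempt: int) -> str:
    # First attempt names a column that does not exist, second one is valid.
    return ["comment_body", "text"][min(attempt, 1)]


def schema_judge(task: str, candidate: str) -> bool:
    return candidate in SCHEMA


result = generate_with_judge("pick the text column", worker, schema_judge)
print(result)
```

The invented column name is stopped at the step where it appears, so nothing downstream ever sees it. A judge that checks against ground truth like a schema is the easy case; judging free-form reasoning is much harder.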

Saurabh is careful to frame this accurately: “At the micro level, LLMs are going to hallucinate. But at the macro level, you can remove a lot of the errors created by hallucination.” The number he offers is striking: a reduction from a hallucination rate of roughly 80% on a bare model to 11% to 12% at the system level.

The problem of maintaining consistent outputs when switching between models, which happens automatically when one fails, is addressed through what Gaurav calls their context transfer protocol, combined with what he characterizes as context engineering.

Context engineering is about ensuring that when the baton passes from one model to another, the new model has everything it needs to maintain quality and direction. “Making sure [models] can produce similar outcomes,” Gaurav says, “is hard. That’s what we’ve worked on internally.”
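
One way to picture the baton pass is a shared context object that travels with the task when a model fails. The sketch below is purely illustrative and is not the context transfer protocol Gaurav refers to, whose details are not public; the `Context` fields, the model callables, and the use of `RuntimeError` to signal failure are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Context:
    goal: str
    decisions: list[str] = field(default_factory=list)  # choices made so far
    failures: list[str] = field(default_factory=list)   # which models failed, and why


Model = Callable[[Context], str]


def run_with_failover(models: dict[str, Model], ctx: Context) -> str:
    """Try models in order, handing the same context to each fallback."""
    for name, model in models.items():
        try:
            return model(ctx)
        except RuntimeError as err:
            ctx.failures.append(f"{name}: {err}")
    raise RuntimeError("all models failed")


# Hypothetical models: the primary times out, the backup continues the work.
def primary(ctx: Context) -> str:
    raise RuntimeError("timeout")


def backup(ctx: Context) -> str:
    return f"{ctx.goal} | keep: {', '.join(ctx.decisions)}"


ctx = Context(goal="moderation model", decisions=["metric=F1", "no GPU for preprocessing"])
output = run_with_failover({"primary": primary, "backup": backup}, ctx)
print(output, ctx.failures)
```

The fallback model receives the earlier decisions along with the goal, which is the minimum it needs to avoid silently reverting choices a human already made.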

Neo treats hallucination not as a model-level problem to be solved once, but as a systems-level problem to be continuously managed.

The Tragedy of the Cognitive Commons: Does AI Hollow Out the Craft?

It seems inevitable: just like software engineering, machine learning is being transformed. What can we expect to happen to these crafts as engineers move out of the trenches and toward managing agents and doing quality assurance? And what does that foreshadow for other crafts?

This is not a hypothetical concern. A recent working paper by economists Daron Acemoglu, Dingwen Kong, and Asuman Ozdaglar, published through NBER and circulating under the title “AI, Human Cognition and Knowledge Collapse”, argues that agentic AI is structurally positioned to erode the collective knowledge base on which it depends.

The mechanism is subtle but devastating: human beings produce general knowledge and context-specific knowledge jointly. When you debug a pipeline, you’re not just fixing this pipeline – you’re deepening your understanding of how pipelines fail in general. Strip out the debugging, and you get the fix but not the learning.

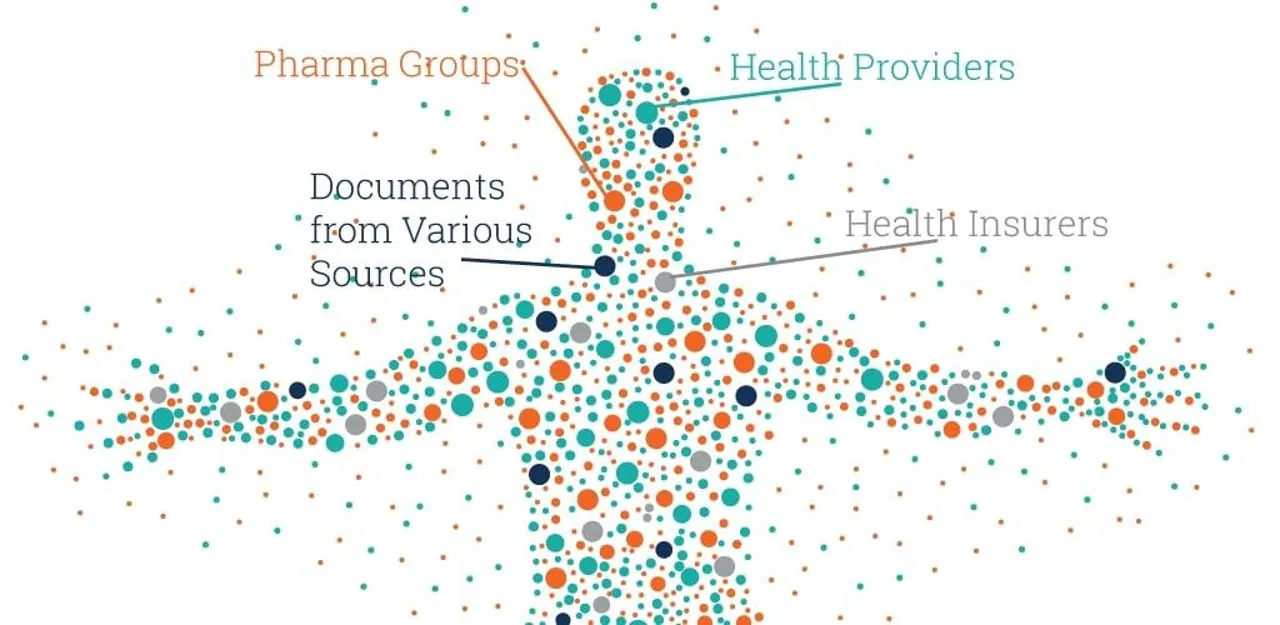

Do that at scale, across a profession, over years, and the shared understanding that makes the AI’s recommendations meaningful in the first place begins to quietly degrade. This is the tragedy of the cognitive commons: the smartest AI could produce the dumbest society, not through any dramatic failure, but through a rational, invisible erosion of the expertise that makes intelligent use of AI possible.

The Tragedy of the Cognitive Commons. Source: The Structural Lens

The Vij brothers push back on this framing. They don’t claim the concern is wrong. They claim Neo has been designed specifically to resist it.

Saurabh’s response is personal. He learned to code during his physics career, doing work he didn’t particularly enjoy. He credits that foundation with giving him the ability to understand what Neo is doing under the hood, to interrogate its choices, and to ask meaningful questions about the differences between LoRA, QLoRA, DoRA, and DPO.

“I didn’t enjoy coding,” he says, “but it actually helped me today.” He is not arguing that foundations don’t matter. He’s arguing that Neo is a better environment for building foundations than trial-and-error alone, because the transparency is built in.

Gaurav extends this into a design principle: the microscopic view is not an optional feature. It’s structural. The idea is that Neo users can see exactly what the system did, why it chose one architecture over another, what the data issues were, and how the models compared. The evaluation reports aren’t summaries. They’re readable accounts of decisions made.

Whether this holds at scale, whether the learning actually transfers or whether it becomes a kind of comfortable illusion of understanding, is an empirical question that no one has yet answered with data. But the design intent is to make the scaffolding visible rather than invisible, to resist the substitution trap by keeping the human in a position where understanding is possible, not merely optional.

What Comes Next: Kaggle Grandmaster as a Service

Neo is currently in waitlisted access, deliberately released in batches. Saurabh explains the reasoning in terms that reveal a company with more patience than most: “Before we go out for more marketing or raising money, we want to make sure we have engaged users using Neo on a day-to-day basis. Once we hit our milestones, we’ll go out and raise.”

That’s not common in an era when AI startups routinely raise nine-figure rounds before their products have found a stable user base. It suggests either unusual discipline or an unusual confidence in the product’s trajectory – probably both.

The roadmap has two major axes. The first is capability depth: improving Neo’s ability to handle increasingly complex tasks, with MLE-Bench scores as the public measure of progress. The second is integration breadth: MCP tooling to connect Neo to the full stack of machine learning infrastructure such as Databricks, MLflow, experiment tracking systems, and data warehouses, so that the pipeline it builds slots into whatever a team is already running.

The longer-horizon ambition is stated with more directness than one usually hears from founders: to provide every researcher, developer, and company on the planet with access to a Kaggle Grandmaster-level machine learning engineer, at a fraction of the cost.

There is a broader context to this ambition that the benchmark and the ETA case study together begin to sketch. The emergence of agentic AI systems capable of navigating complex technical pipelines represents a meaningful shift in what it means to build AI-powered products: not just generating code snippets, but running experiments, evaluating outcomes, and iterating toward a goal.

Tools like Claude Code or Cursor have made this landscape familiar to software engineers. Neo is making a similar argument to machine learning engineers and, more interestingly, to domain experts who are not engineers at all.

Saurabh’s protein folding example – integrating physical principles he already understands with a machine learning architecture he wouldn’t ordinarily know how to build – is perhaps the sharpest illustration of what Neo is reaching for.

Not the automation of machine learning engineering, exactly. Something more like the democratization of machine learning engineering’s output, with the understanding that the two are not the same thing.

Whether that distinction holds in practice – whether the cognitive commons is preserved or quietly drained – may be the most important question the next few years will answer. Neo has at least built the question into its architecture. That is not nothing.