Cloudera, Hortonworks, and how they are progressing in their Hadoop security agenda

Back in July 2014, Gigaom published a research note I wrote on Hadoop security. Progress in this domain is rapid, and in the last few days a couple of related developments have called for an update to the picture drawn in that report.

As noted in the report, Cloudera and Hortonworks both made security-related acquisitions to expand their offerings at roughly the same time (the end of the second quarter of 2014). Cloudera acquired Gazzang, a company offering data encryption for Hadoop. Hortonworks acquired XA Secure, a company offering an integrated security solution for Hadoop that includes role-based access control, audit trails and data encryption.

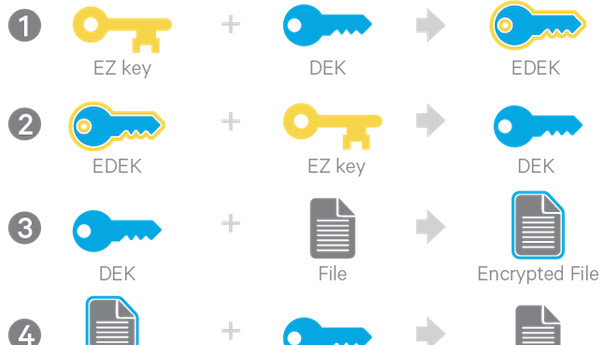

Cloudera has just announced that its latest release, CDH 5.3, adds encryption for HDFS data both at rest and in motion. Although Cloudera has not said so explicitly, this functionality appears to be based on Gazzang code, perhaps with some contribution from Rhino too. Rhino is an open source project backed by Intel that also focuses on data encryption. Cloudera and Intel are strategic partners, with Intel having invested $740 million to buy an 18% share in Cloudera.

Hortonworks plan for Hadoop security. Source: Hortonworks.

Hortonworks, on the other hand, announced in December 2014 a new version (0.4.0) of the Apache Ranger project that it backs. Apache Ranger is an incubator project that Hortonworks aims to use to integrate and open-source XA Secure functionality. Hortonworks' policy on security is based on three pillars: Comprehensive Security, Central Administration and Consistent Integration. According to Hortonworks, Apache Ranger now offers a centralized security framework to manage fine-grained access control over Hadoop data access components. Security administrators can also use Apache Ranger to manage audit tracking and policy analytics.

What Apache Ranger does not seem to offer at the moment, however, is data encryption. It will be interesting to see if and how the latest additions from Cloudera and Hortonworks can be combined to work harmoniously in the main Apache Hadoop distribution. At the moment it looks like a patchwork, but let’s hope that consistent integration will deliver on its promise.